Machine learning promises to make drug discovery faster, better and cheaper, but it requires access to vast datasets of molecules and their properties. While every pharma company can apply machine learning algorithms to their own data, the true power of this technology comes from combining the datasets of several companies to fuel the algorithms. However, these are the exact data upon which pharma’s future profits depend, and are therefore ultra-confidential. How can they be pooled to speed up drug discovery?

The MELLODDY project is working on a way to make the most of the combined power of these highly valuable datasets without sharing them, exposing them, or even moving them from where they’re housed.

Drug discovery basics

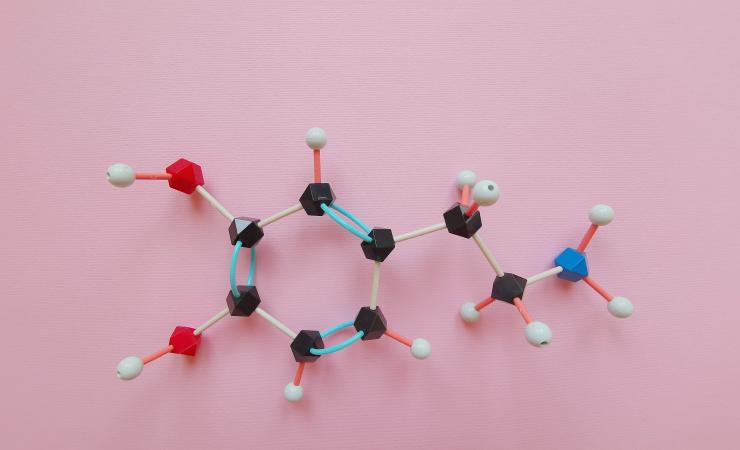

Figuring out which chemicals could be turned into medicines is no easy task. It requires trawling vast libraries of chemical compounds to spot those that look like they might have a beneficial effect on a target in the human body. If a biological target, like a protein or an enzyme, behaves in a certain way when interacting with a particular compound (in a way that would relieve a symptom in its human owner, say), then that compound would be flagged as a good lead for further investigation.

Whether or not a chemical is a good candidate is not only a question of its effects on a given biological target; it’s also important to know how it will interact with other molecules and what side effects it might cause. It’s a job best left to machines. High-throughput screening is an automated process in which robotic arms carry out thousands or even millions of rapid tests using biological samples (read about the IMI initiative European Lead Factory/ESCulab, a compound library and screening centre).

Competitive data sharing

Machine learning, a type of artificial intelligence, promises to speed up different steps in the complex drug-discovery journey, including this early screening process. Pharma companies have a lot of chemical and biological data stored on their servers, and machine learning excels at recognising patterns in this kind of data. Algorithms can be programmed to look for certain patterns, learn from previous observations and become better and better at predicting what makes for a good drug target. The more data you feed them, the more they learn. If pharma companies could pool their respective troves of data, the algorithms’ predictive power would be supercharged, helping researchers make crucial decisions.

This proprietary data is too valuable for the companies to risk sharing, however, so the MELLODDY project is testing a workaround using a technique called federated learning. Rather than uploading all the datasets to one spot, as in traditional centralised machine learning, federated learning allows the datasets to remain behind their owners’ firewalls, stored independently of each other. Using the datasets as a kind of training manual, machine learning techniques are applied across the decentralised servers without actually exchanging or moving data. With this method, algorithms go back and forth between subsets of each company’s data and the central server, which prevents anyone from knowing which company’s data contributes to the central model. This exposes the algorithm to a much wider range of data than any one company has in-house, all while keeping sensitive data safely ensconced within each company’s own infrastructure.
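To make the idea concrete, here is a minimal sketch of one common federated learning scheme, federated averaging, on a toy problem. The article does not describe MELLODDY’s actual algorithm, so this is an illustration under stated assumptions rather than the project’s method: the model is a one-variable linear fit, the three “partners” hold made-up data, and every function name is hypothetical. It also leaves out the encryption and access controls a real deployment would need.

```python
import random


def local_update(weights, data, lr=0.1, epochs=5):
    """Run a few gradient-descent steps on one partner's private data.

    Only the updated (w, b) pair is returned; the raw (x, y) pairs
    never leave this function.
    """
    w, b = weights
    n = len(data)
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return (w, b)


def federated_average(updates, sizes):
    """Combine partner models, weighting each by its dataset size."""
    total = sum(sizes)
    w = sum(u[0] * s for u, s in zip(updates, sizes)) / total
    b = sum(u[1] * s for u, s in zip(updates, sizes)) / total
    return (w, b)


def train_federated(partner_datasets, rounds=50):
    """Central loop: send the model out, collect updates, average them."""
    global_weights = (0.0, 0.0)
    sizes = [len(d) for d in partner_datasets]
    for _ in range(rounds):
        updates = [local_update(global_weights, d) for d in partner_datasets]
        global_weights = federated_average(updates, sizes)
    return global_weights


def make_partner_data(rng, n, lo, hi):
    """Hypothetical toy data: y = 3x + 1 plus a little noise."""
    xs = [rng.uniform(lo, hi) for _ in range(n)]
    return [(x, 3.0 * x + 1.0 + rng.gauss(0, 0.01)) for x in xs]


if __name__ == "__main__":
    rng = random.Random(0)
    # Each partner sees a different slice of the input range.
    partners = [
        make_partner_data(rng, 40, -1.0, 0.0),
        make_partner_data(rng, 60, 0.0, 1.0),
        make_partner_data(rng, 100, -1.0, 1.0),
    ]
    w, b = train_federated(partners)
    print(f"learned w={w:.3f}, b={b:.3f}")
```

In this sketch the central loop only ever handles model parameters, never the partners’ data points, and the shared model is trained on a wider input range than either of the first two partners covers alone.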

A first successful run

The project says it has brought together the world’s largest collection of small molecules with known biochemical or cellular activity, with over a billion data points from the datasets of 10 pharmaceutical companies. This includes not only chemical libraries but also hundreds of terabytes of imaging data. The pharma companies will be able to launch queries about specific compounds that can be sent to each organisation’s data repositories to look for potential matches, and use whatever predictions emerge during the project. Blockchain technology will be used to log all the activities to make sure data has not been improperly accessed. The technology, developed jointly by tech and academic partners, enables competing pharma companies to collaborate in a novel way (read more about the research partners).
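The logging idea can be illustrated with a simple hash chain, the basic building block behind blockchain-style records. This is a simplified sketch, not the project’s ledger: the article gives no details of the platform’s design, the event fields and function names below are invented for illustration, and a real blockchain would also replicate the log across several parties.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def entry_hash(prev_hash, event):
    """Hash an event together with the hash of the entry before it."""
    payload = json.dumps({"prev": prev_hash, "event": event}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_event(log, event):
    """Add an event to the log, chaining it to the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append(
        {"event": event, "prev": prev_hash, "hash": entry_hash(prev_hash, event)}
    )


def verify(log):
    """Return True only if no entry has been altered, removed or reordered."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != entry_hash(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True


if __name__ == "__main__":
    log = []
    append_event(log, {"actor": "partner_a", "action": "query"})
    append_event(log, {"actor": "partner_b", "action": "model_update"})
    print(verify(log))  # chain is intact
    log[0]["event"]["action"] = "export"  # tamper with an old record
    print(verify(log))  # tampering is detected
```

Because each entry’s hash depends on the one before it, changing an old record invalidates the chain from that point on, which is what makes improper changes detectable.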

Earlier this year, MELLODDY announced that it had managed to carry out the first successful federated learning run using this new predictive modelling platform. Janssen’s Hugo Ceulemans, MELLODDY project leader, said, “We now have an operational platform, rigorously vetted by the consortium’s 10 pharmaceutical partners, found to be secure to host their data – an enormous accomplishment. Over the next year we’ll turn our focus on studying the hypothesis that multi-partnered modeling will yield superior predictive models for drug discovery.”